Models

Below is a list of popular OSS models that you can query instantly or deploy on dedicated hardware with Predibase. The models are available via our UI Playground, Python SDK, or REST API.

Inference on our serverless models is billed per token. See the pricing page for details.

Serverless Endpoints

| Deployment Name | Parameters | Architecture | License | Context Window (Max Tokens) | Always On |
|---|---|---|---|---|---|
| llama-3-8b | 8 billion | Llama-3 | Meta (request for commercial use) | 8192 | Yes |
| llama-3-8b-instruct | 8 billion | Llama-3 | Meta (request for commercial use) | 8192 | Yes |
| llama-3-70b | 70 billion | Llama-3 | Meta (request for commercial use) | 8192 | Yes |
| llama-3-70b-instruct | 70 billion | Llama-3 | Meta (request for commercial use) | 8192 | Yes |
| mistral-7b | 7 billion | Mistral | Apache 2.0 | 8000 | Yes |
| mistral-7b-instruct | 7 billion | Mistral | Apache 2.0 | 8000 | Yes |
| mistral-7b-instruct-v0-2 | 7 billion | Mistral | Apache 2.0 | 8000 | Yes |
| mixtral-8x7b-v0-1 | 46.7 billion | Mixtral | Apache 2.0 | 32768 | Yes |
| mixtral-8x7b-instruct-v0-1 | 46.7 billion | Mixtral | Apache 2.0 | 32768 | Yes |
| zephyr-7b-beta | 7 billion | Mistral | MIT | 8000 | Yes |
| llama-2-7b | 7 billion | Llama-2 | Meta (request for commercial use) | 4096 | Yes |
| llama-2-7b-chat | 7 billion | Llama-2 | Meta (request for commercial use) | 4096 | Yes |
| llama-2-13b | 13 billion | Llama-2 | Meta (request for commercial use) | 4096 | No |
| llama-2-13b-chat | 13 billion | Llama-2 | Meta (request for commercial use) | 4096 | No |
| llama-2-70b | 70 billion | Llama-2 | Meta (request for commercial use) | 4096 | No |
| llama-2-70b-chat | 70 billion | Llama-2 | Meta (request for commercial use) | 4096 | No |
| codellama-7b | 7 billion | Llama-2 | Meta (request for commercial use) | 4096 | Yes |
| codellama-7b-instruct | 7 billion | Llama-2 | Meta (request for commercial use) | 4096 | No |
| codellama-13b-instruct | 13 billion | Llama-2 | Meta (request for commercial use) | 4096 | Yes |
| codellama-70b-instruct | 70 billion | Llama-2 | Meta (request for commercial use) | 4096 | No |
| gemma-2b | 2.5 billion | Gemma | | 8192 | No |
| gemma-2b-instruct | 2.5 billion | Gemma | | 8192 | No |
| gemma-7b | 8.5 billion | Gemma | | 8192 | No |
| gemma-7b-instruct | 8.5 billion | Gemma | | 8192 | No |
| phi-2 | 2.7 billion | Phi-2 | MIT | 2048 | No |
| phi-3-mini-4k-instruct | 3.8 billion | Phi-3 | MIT | 4096 | No |

Note: Models that are not always on scale down to zero when idle and may need a brief spin-up time before serving requests. If you would like us to add support for a serverless endpoint or make an existing endpoint always on, please get in touch on Discord.
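The context window column can be used to budget generation length. The following is a minimal sketch, assuming the window covers the prompt and the generated tokens together, and that the prompt token count comes from the model's tokenizer (not shown). The dictionary copies a few rows from the table above; the helper name `max_new_tokens_budget` is hypothetical and not part of the Predibase SDK.

```python
# Context windows (max tokens) copied from a few rows of the serverless table.
CONTEXT_WINDOW = {
    "llama-3-8b-instruct": 8192,
    "mistral-7b-instruct": 8000,
    "mixtral-8x7b-instruct-v0-1": 32768,
    "llama-2-7b-chat": 4096,
    "phi-2": 2048,
}


def max_new_tokens_budget(deployment: str, prompt_tokens: int) -> int:
    """Return how many tokens remain for generation after the prompt.

    Assumes the context window is shared by prompt and generated tokens.
    """
    window = CONTEXT_WINDOW[deployment]
    if prompt_tokens >= window:
        raise ValueError(
            f"prompt ({prompt_tokens} tokens) does not fit in the "
            f"{window}-token window of {deployment}"
        )
    return window - prompt_tokens


# A 1500-token prompt on phi-2 leaves 2048 - 1500 tokens for the response.
print(max_new_tokens_budget("phi-2", 1500))  # 548
```

A check like this is most useful for the smaller windows (2048 and 4096 tokens), where a long instruction template plus context can consume most of the budget.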

Dedicated Deployments

While popular models can be prompted via serverless endpoints, Predibase also offers the ability to spin up deployments on dedicated hardware for nearly any open-source model available. These models fall into two categories:

- Available LLMs: These are models we have first-class support for. They have been verified to work well.

- Best-Effort LLMs: These are models that have not been verified and may occasionally fail to deploy as expected.

Available LLMs

| Name | Parameters | Architecture | License | Context Window (Max Tokens) |
|---|---|---|---|---|
| llama-3-8b | 8 billion | Llama-3 | Meta (request for commercial use) | 8192 |
| llama-3-8b-instruct | 8 billion | Llama-3 | Meta (request for commercial use) | 8192 |
| llama-3-70b | 70 billion | Llama-3 | Meta (request for commercial use) | 8192 |
| llama-3-70b-instruct | 70 billion | Llama-3 | Meta (request for commercial use) | 8192 |
| mistral-7b | 7 billion | Mistral | Apache 2.0 | 8000 |
| mistral-7b-instruct | 7 billion | Mistral | Apache 2.0 | 8000 |
| mistral-7b-instruct-v0-2 | 7 billion | Mistral | Apache 2.0 | 8000 |
| mixtral-8x7b-v0-1 | 46.7 billion | Mixtral | Apache 2.0 | 32768 |
| mixtral-8x7b-instruct-v0-1 | 46.7 billion | Mixtral | Apache 2.0 | 32768 |
| zephyr-7b-beta | 7 billion | Mistral | MIT | 8000 |
| llama-2-7b | 7 billion | Llama-2 | Meta (request for commercial use) | 4096 |
| llama-2-7b-chat | 7 billion | Llama-2 | Meta (request for commercial use) | 4096 |
| llama-2-13b | 13 billion | Llama-2 | Meta (request for commercial use) | 4096 |
| llama-2-13b-chat | 13 billion | Llama-2 | Meta (request for commercial use) | 4096 |
| llama-2-70b | 70 billion | Llama-2 | Meta (request for commercial use) | 4096 |
| llama-2-70b-chat | 70 billion | Llama-2 | Meta (request for commercial use) | 4096 |
| codellama-13b-instruct | 13 billion | Llama-2 | Meta (request for commercial use) | 4096 |
| codellama-70b-instruct | 70 billion | Llama-2 | Meta (request for commercial use) | 4096 |
| gemma-2b | 2.5 billion | Gemma | | 8192 |
| gemma-2b-instruct | 2.5 billion | Gemma | | 8192 |
| gemma-7b* | 8.5 billion | Gemma | | 8192 |
| gemma-7b-instruct* | 8.5 billion | Gemma | | 8192 |
| phi-2 | 2.7 billion | Phi | MIT | 2048 |
| phi-3-mini-4k-instruct | 3.8 billion | Phi-3 | MIT | 4096 |

*Gemma-7b models cannot be deployed in the Developer tier because an A10G cannot support their requirements effectively.

Best-effort LLMs

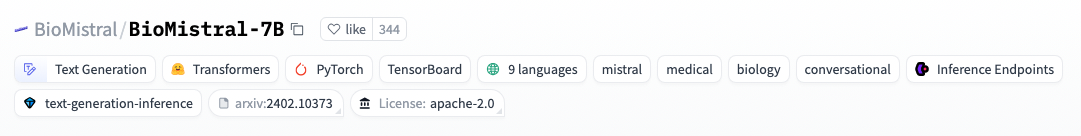

Predibase provides best-effort support for any Huggingface LLM meeting the following criteria:

- Uses one of the supported LoRAX architectures

- Has the "Text Generation" and "Transformer" tags

- Does not have a "custom_code" tag
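The criteria above can be expressed as a simple pre-check before attempting a deployment. This is only a sketch: the function name is hypothetical, the tag slugs `text-generation` and `transformers` are assumed to be the machine-readable forms of the "Text Generation" and "Transformer" tags, and the set of supported architectures must be supplied by the caller from the LoRAX documentation (the values in the usage lines are illustrative only).

```python
from typing import Iterable, Set


def is_best_effort_candidate(
    architecture: str,
    tags: Iterable[str],
    supported_architectures: Set[str],
) -> bool:
    """Return True if a model meets the three best-effort criteria.

    - its architecture is one of the caller-supplied supported architectures
    - it carries both the text-generation and transformers tags (assumed slugs)
    - it does not carry the custom_code tag
    """
    tag_set = {tag.lower() for tag in tags}
    return (
        architecture in supported_architectures
        and "text-generation" in tag_set
        and "transformers" in tag_set
        and "custom_code" not in tag_set
    )


# Illustrative values only; consult the LoRAX docs for the real architecture list.
supported = {"mistral", "llama"}
print(is_best_effort_candidate("mistral", ["transformers", "text-generation"], supported))
print(is_best_effort_candidate("mistral", ["transformers", "text-generation", "custom_code"], supported))
```

Passing this check does not guarantee a successful deployment, since best-effort models are unverified.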

How to Deploy a Custom LLM

- Get the Huggingface ID for your model by clicking the copy icon on the custom base model's page, e.g. "BioMistral/BioMistral-7B".

- Pass the Huggingface ID as the `base_model`, the appropriate accelerator ID for `accelerator` based on your tier or contract, and `hf_token` (your Huggingface token) if deploying a private model.

      from predibase import Predibase, DeploymentConfig

      pb = Predibase(api_token="<YOUR PREDIBASE API TOKEN>")

      pb.deployments.create(
          name="my-biomistral-7b",
          config=DeploymentConfig(
              base_model="BioMistral/BioMistral-7B",
              accelerator="a10_24gb_100",  # Required for custom models
              # hf_token="<YOUR HUGGINGFACE TOKEN>",  # Required for private Huggingface models
              # cooldown_time=3600,  # Value in seconds; defaults to 0, which means the deployment is always on
          ),
      )

- Prompt your deployment as normal.

Instruction Templates

The following instruction templates are used in the UI when prompting our serverless deployments. When using the SDK or REST API for inference, you need to include these templates in the prompt yourself; otherwise, response quality may suffer.
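One way to apply a template in client code is to substitute the `<insert your prompt here>` placeholder before sending the request. The sketch below uses the single-line codellama-13b-instruct template from this page; the helper name `apply_template` is hypothetical and not part of the Predibase SDK.

```python
PLACEHOLDER = "<insert your prompt here>"

# Template copied from the codellama-13b-instruct entry on this page.
CODELLAMA_13B_INSTRUCT = "<s>[INST] <insert your prompt here> [/INST]"


def apply_template(template: str, user_prompt: str) -> str:
    """Return the template with its placeholder replaced by user_prompt."""
    if PLACEHOLDER not in template:
        raise ValueError("template has no prompt placeholder")
    return template.replace(PLACEHOLDER, user_prompt)


prompt = apply_template(
    CODELLAMA_13B_INSTRUCT, "Write a function that reverses a string."
)
print(prompt)  # <s>[INST] Write a function that reverses a string. [/INST]
```

The resulting string is what you would pass as the prompt to the SDK or REST API. Base (non-instruct, non-chat) models take the raw prompt with no template.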

Llama 3 models

Instruct models

<|begin_of_text|><|start_header_id|>system<|end_header_id|>

You are a helpful, detailed, and polite artificial intelligence assistant. Your answers are clear and suitable for a professional environment.

If context is provided, answer using only the provided contextual information.<|eot_id|><|start_header_id|>user<|end_header_id|>

<insert your prompt here><|eot_id|><|start_header_id|>assistant<|end_header_id|>\n\n

Non-instruct models

None
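For Llama 3 instruct models, the template above can be assembled programmatically. This is a sketch under assumptions: the function name is hypothetical, each header is followed by a blank line (two newline characters) as in Meta's published Llama 3 chat format, and the two sentences of the default system prompt are separated by a blank line. Adjust the whitespace if your results differ from the UI.

```python
# Default system prompt copied from the Llama 3 instruct template on this page.
DEFAULT_SYSTEM_PROMPT = (
    "You are a helpful, detailed, and polite artificial intelligence assistant. "
    "Your answers are clear and suitable for a professional environment.\n\n"
    "If context is provided, answer using only the provided contextual information."
)


def llama3_instruct_prompt(
    user_prompt: str, system_prompt: str = DEFAULT_SYSTEM_PROMPT
) -> str:
    """Wrap a user prompt in the Llama 3 instruct template."""
    return (
        "<|begin_of_text|>"
        "<|start_header_id|>system<|end_header_id|>\n\n"
        f"{system_prompt}<|eot_id|>"
        "<|start_header_id|>user<|end_header_id|>\n\n"
        f"{user_prompt}<|eot_id|>"
        # The trailing assistant header cues the model to start its reply.
        "<|start_header_id|>assistant<|end_header_id|>\n\n"
    )


print(llama3_instruct_prompt("What is LoRA?"))
```

Keeping the system prompt as a parameter lets you reuse the same wrapper when you want different instructions from the UI default.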

Llama 2 models

Chat models

<<SYS>>

You are a helpful, detailed, and polite artificial intelligence assistant. Your answers are clear and suitable for a professional environment.

If context is provided, answer using only the provided contextual information.

<</SYS>>

[INST] <insert your prompt here> [/INST]

Non-chat models

None

Codellama models

codellama-13b-instruct

<s>[INST] <insert your prompt here> [/INST]

codellama-70b-instruct

<s>Source: user\n\n <insert your prompt here> <step> Source: assistant\nDestination: user\n\n

Mistral & Mixtral models

<<SYS>>

You are a helpful, respectful and honest assistant. Always answer as helpfully as possible, while being safe. Your answers should not include any harmful, unethical, racist, sexist, toxic, dangerous, or illegal content. Please ensure that your responses are socially unbiased and positive in nature.

If a question does not make any sense, or is not factually coherent, explain why instead of answering something not correct. If you don't know the answer to a question, please don't share false information.

<</SYS>>

[INST] <insert your prompt here> [/INST]

Gemma models

Instruct models

<start_of_turn>user

<insert your prompt here><end_of_turn>

<start_of_turn>model

Non-instruct models

None

Phi-2

<|im_start|>user\n<insert your prompt here><|im_end|>\n

Zephyr-7b-beta

<|system|>

You are a helpful, respectful and honest assistant. Always answer as helpfully as possible, while being safe. Your answers should not include any harmful, unethical, racist, sexist, toxic, dangerous, or illegal content. Please ensure that your responses are socially unbiased and positive in nature.

If a question does not make any sense, or is not factually coherent, explain why instead of answering something not correct. If you don't know the answer to a question, please don't share false information.</s>

<|user|>

<insert your prompt here></s>

<|assistant|>